Research Material 2018

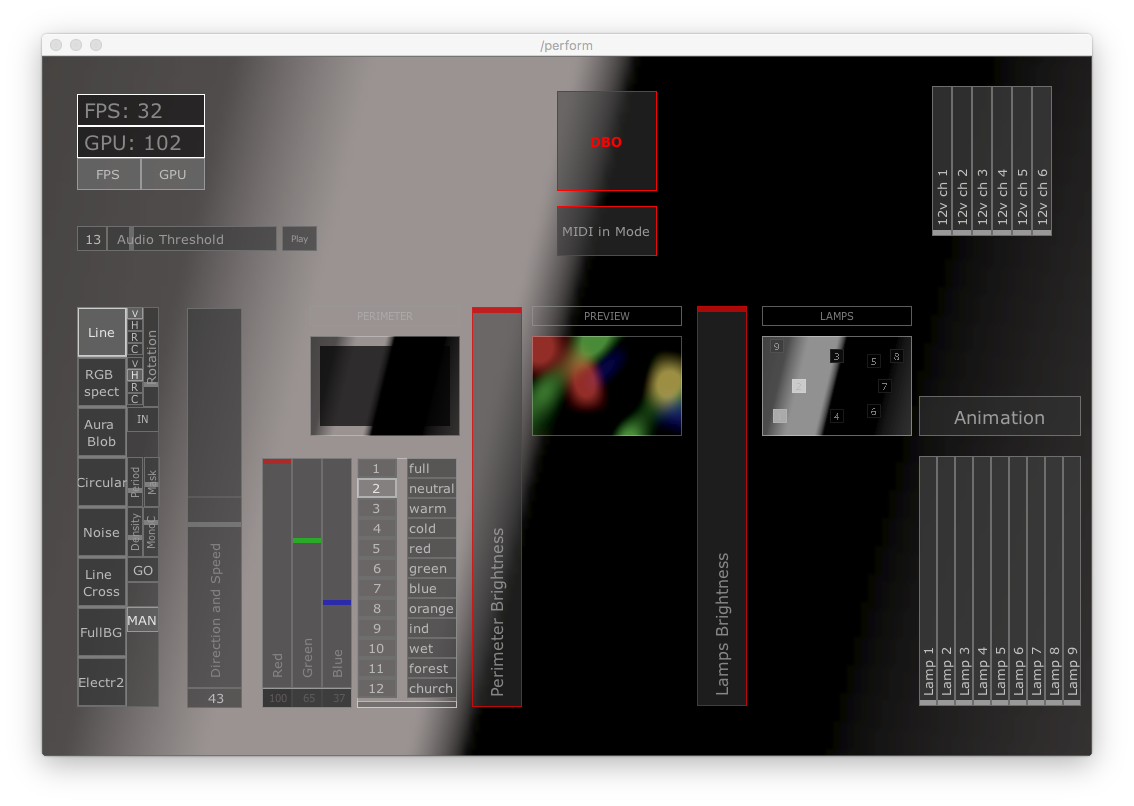

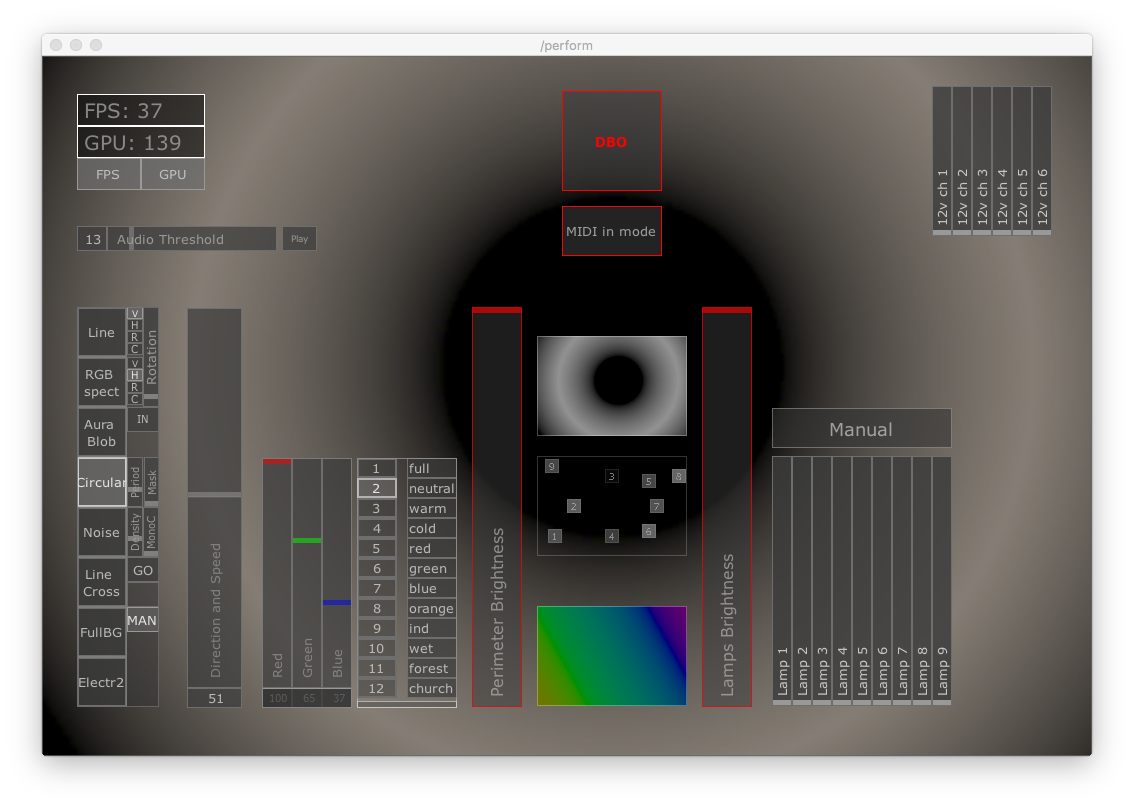

Interface for Live Control and Manipulation of Lighting Clusters

These are screenshots of the interface of software I developed to control clusters of lighting and other scenic effects.

This custom-made software generates live video content, which is then mapped to lighting fixtures across the stage, producing distinctive effects with a wide dynamic range: from an in-your-face digital look all the way to something subtle, minimal and organic.
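As a rough illustration of what mapping video to fixtures can mean, the sketch below samples one pixel of a frame for each fixture. It is not the software described here: the `sample_fixtures` function, the fixture names and the idea of storing fixture positions as normalized coordinates between 0 and 1 are all assumptions made for the example.

```python
from typing import Dict, List, Tuple

Pixel = Tuple[int, int, int]   # (red, green, blue), each 0-255
Frame = List[List[Pixel]]      # rows of pixels, indexed as frame[y][x]


def sample_fixtures(frame: Frame,
                    fixtures: Dict[str, Tuple[float, float]]) -> Dict[str, Pixel]:
    """Return one colour per fixture by sampling the frame at the fixture's position.

    Positions are assumed to be normalized (u, v) coordinates in the range 0..1.
    """
    height = len(frame)
    width = len(frame[0])
    colours = {}
    for name, (u, v) in fixtures.items():
        # Convert normalized coordinates to pixel indices, clamped to the last pixel.
        x = min(int(u * width), width - 1)
        y = min(int(v * height), height - 1)
        colours[name] = frame[y][x]
    return colours


# Hypothetical 2x2 frame and fixture layout, for illustration only.
frame = [
    [(255, 0, 0), (0, 255, 0)],
    [(0, 0, 255), (255, 255, 255)],
]
fixtures = {"left_front": (0.0, 0.0), "right_back": (0.9, 0.9)}
colours = sample_fixtures(frame, fixtures)
```

In a live setting the same sampling would run on every new frame, with the resulting colours sent on to the fixtures.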

A version of this software was later integrated into the lighting design of a production called Obscene Madame D (2018).

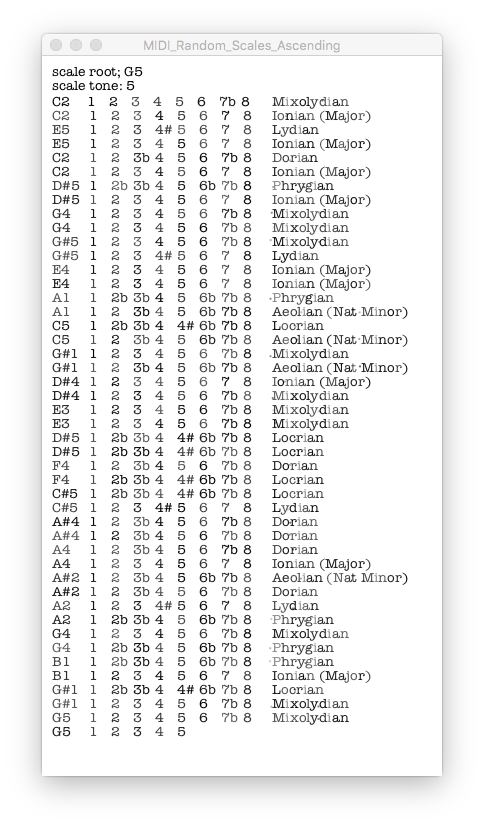

Ear Training App

This is a screenshot of an app I developed for personal use. It generates and plays notes in the different modes of the major scale, changing the root note every two rounds or whenever desired.
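For readers unfamiliar with modes, here is a minimal sketch of how the notes of a major scale mode could be computed as MIDI note numbers. It is an assumption about one possible approach, not the app's actual code: `mode_notes`, `MAJOR_STEPS` and `MODE_NAMES` are names invented for this example, and sending the notes to a MIDI device is left out.

```python
# Whole and half steps of the major scale, in semitones.
MAJOR_STEPS = [2, 2, 1, 2, 2, 2, 1]
# The seven modes, in the order of the scale degree they start on.
MODE_NAMES = ["ionian", "dorian", "phrygian", "lydian",
              "mixolydian", "aeolian", "locrian"]


def mode_notes(root: int, mode: str) -> list:
    """Return the eight MIDI note numbers spanning one octave of the given mode."""
    degree = MODE_NAMES.index(mode)
    # Rotating the major scale step pattern gives the step pattern of the mode.
    steps = MAJOR_STEPS[degree:] + MAJOR_STEPS[:degree]
    notes = [root]
    for step in steps:
        notes.append(notes[-1] + step)
    return notes


c_ionian = mode_notes(60, "ionian")   # 60 is middle C in MIDI
d_dorian = mode_notes(62, "dorian")
```

Changing the root note, as the app does every two rounds, would then amount to calling the function again with a different `root`.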

The purpose of this app is ear training and scale recognition for musicians. It can be connected to any digital audio workstation (such as Ableton Live or Logic) or to any physical MIDI instrument (such as a keyboard or synthesizer).

The interface was intentionally kept minimal, resembling a typewritten document, with letters typed under uneven pressure and a few ink traces. The app's main focus is the sound it generates, so a specific or over-refined look was secondary.

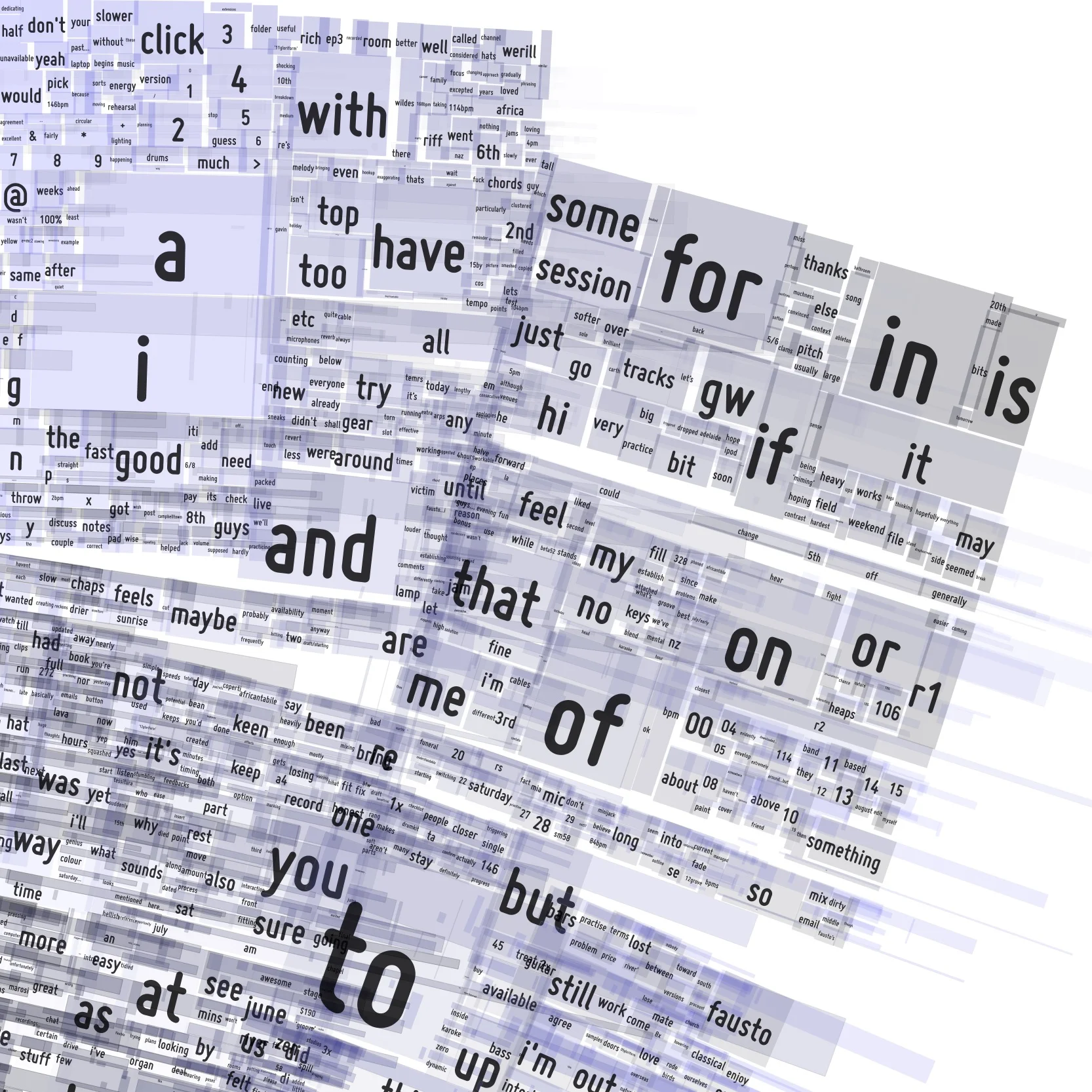

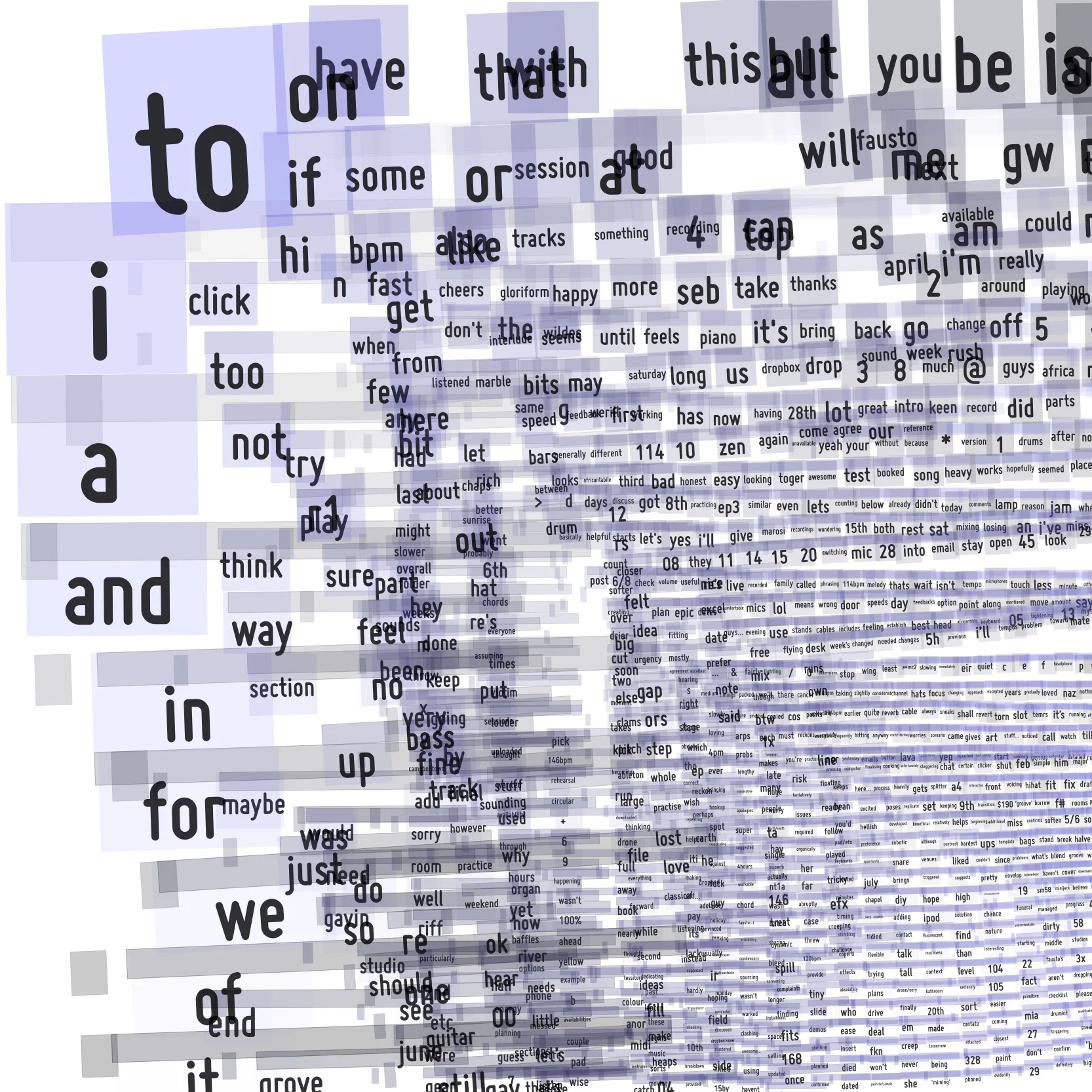

Prototype ideas for a CD cover

The content of these compositions is generated from the words used in emails exchanged by the band members. The more often a word recurs, the larger the rectangle containing it.
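The relationship between word frequency and rectangle size could be sketched as follows. This is only an assumption about how such a mapping might work: the `word_counts` and `rectangle_areas` functions, the `base_area` parameter and the sample emails are all hypothetical, and the area is simply made proportional to the count.

```python
import re
from collections import Counter


def word_counts(emails: list) -> Counter:
    """Count how often each word appears across all emails, ignoring case."""
    counts = Counter()
    for text in emails:
        counts.update(re.findall(r"[a-z']+", text.lower()))
    return counts


def rectangle_areas(counts: Counter, base_area: float = 100.0) -> dict:
    """Give each word a rectangle whose area grows in proportion to its count."""
    return {word: base_area * n for word, n in counts.items()}


# Invented sample text, for illustration only.
emails = ["Rehearsal on Friday", "Friday works, rehearsal at eight"]
areas = rectangle_areas(word_counts(emails))
```

A layout step would still be needed to place the rectangles on the cover; that part is not covered here.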

The workflow of a modern band often revolves around digital platforms, such as shared Dropbox folders, Google Drive spreadsheets and shared calendars, and often relies on email for both creative and production purposes. These platforms can become vital tools and an integral part of the band's creative process.

Further refinements to this idea will include code to omit certain prepositions and articles, so that more significant words come to the surface.
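One common way to do this is a stop-word filter. The sketch below is an assumption about how that refinement might look, not existing code: the `STOP_WORDS` set is a short hypothetical list and `significant_words` is a name chosen for the example.

```python
# A small, hand-picked set of articles and prepositions to leave out.
STOP_WORDS = {"a", "an", "the", "of", "in", "on", "at", "to", "for", "with"}


def significant_words(words: list) -> list:
    """Keep only the words that are not in the stop-word set, ignoring case."""
    return [w for w in words if w.lower() not in STOP_WORDS]


kept = significant_words(["The", "mix", "of", "the", "new", "song"])
```

Applied before counting, this keeps very frequent but uninformative words from occupying the largest rectangles.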

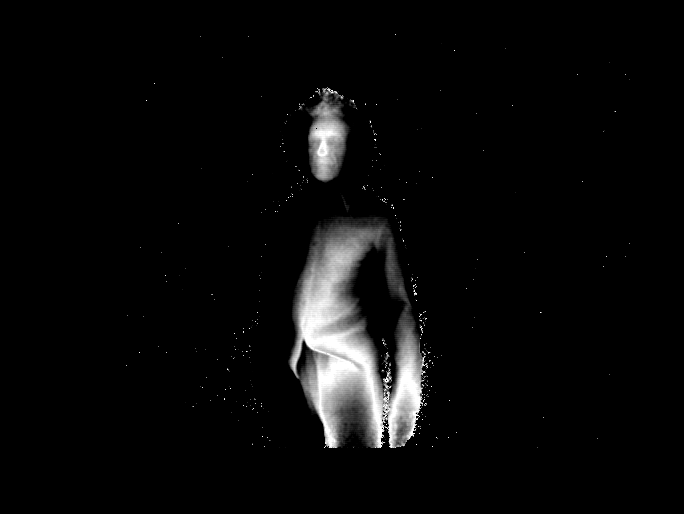

Depth Camera

As part of my research, I have started investigating the different uses and potential of a depth camera.

A depth camera is a device that reads bodies or objects according to the physical position they occupy, regardless of the amount or type of light falling on the subject.

For this research I designed an app that receives depth data from the camera and manipulates several key parameters in real time, such as shifting the distance at which depth is read and altering the depth of field itself.
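The two parameters mentioned above can be pictured as a window over the depth data: a centre distance that can be shifted, and a width that sets how much depth is kept. The following is a minimal sketch under that assumption, not the app itself. The `depth_window` function, the millimetre units and the sample values are hypothetical.

```python
def depth_window(depth_mm: list, centre_mm: float, width_mm: float) -> list:
    """Map depth readings to brightness values between 0.0 and 1.0.

    Readings inside the window become brighter the nearer they are;
    readings outside the window are set to 0.0. width_mm must be positive.
    """
    near = centre_mm - width_mm / 2
    far = centre_mm + width_mm / 2
    result = []
    for row in depth_mm:
        out_row = []
        for d in row:
            if near <= d <= far:
                out_row.append(1.0 - (d - near) / (far - near))
            else:
                out_row.append(0.0)
        result.append(out_row)
    return result


# Hypothetical 2x2 depth frame in millimetres, for illustration only.
depth = [[500, 1000], [1500, 3000]]
mask = depth_window(depth, centre_mm=1000, width_mm=1000)
```

Moving `centre_mm` shifts the distance being read, while changing `width_mm` widens or narrows the slice of space that remains visible.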

The result is a highly engaging and inspiring platform for the performer, offering a unique and captivating aesthetic.