Research

Research is an essential element in Fausto’s practice.

Amplified and driven by Creative Coding, his research is centred on prototyping and aimed at testing visual ideas.

It becomes a creative tool that provides tangible starting points for developing artistic solutions and exploring concepts that will nurture, and become integrated into, broader design projects.

Fausto's designs and research also include custom-made objects and lighting, tailored to meet specific aesthetic and production needs, driving the director's work and the set design towards an evocative and unique space.

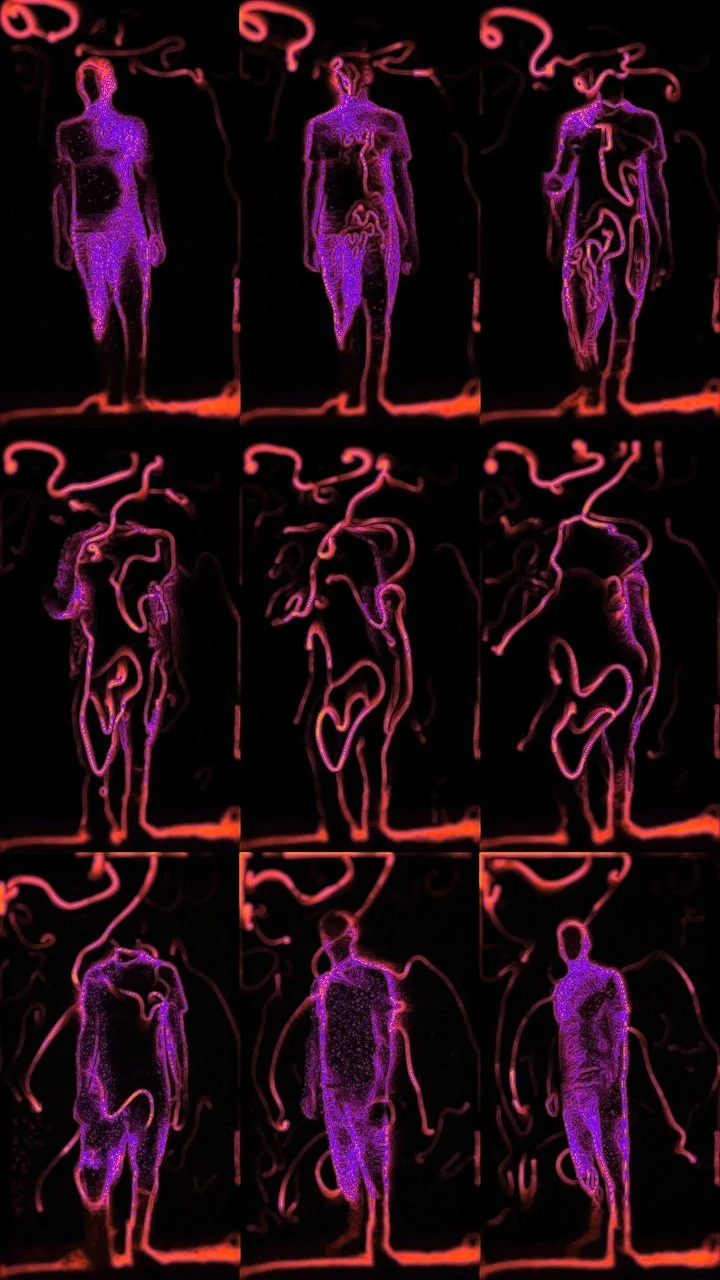

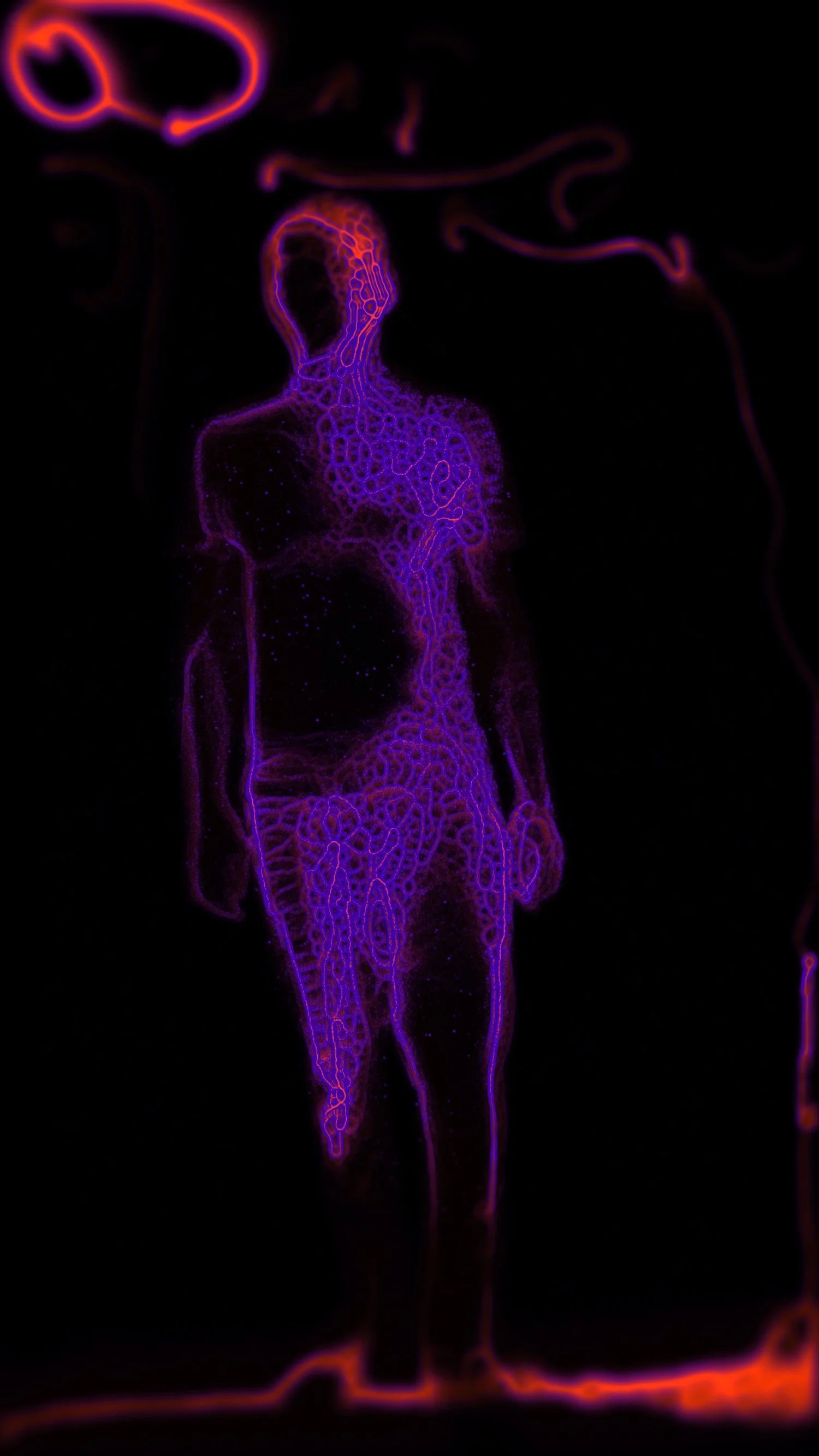

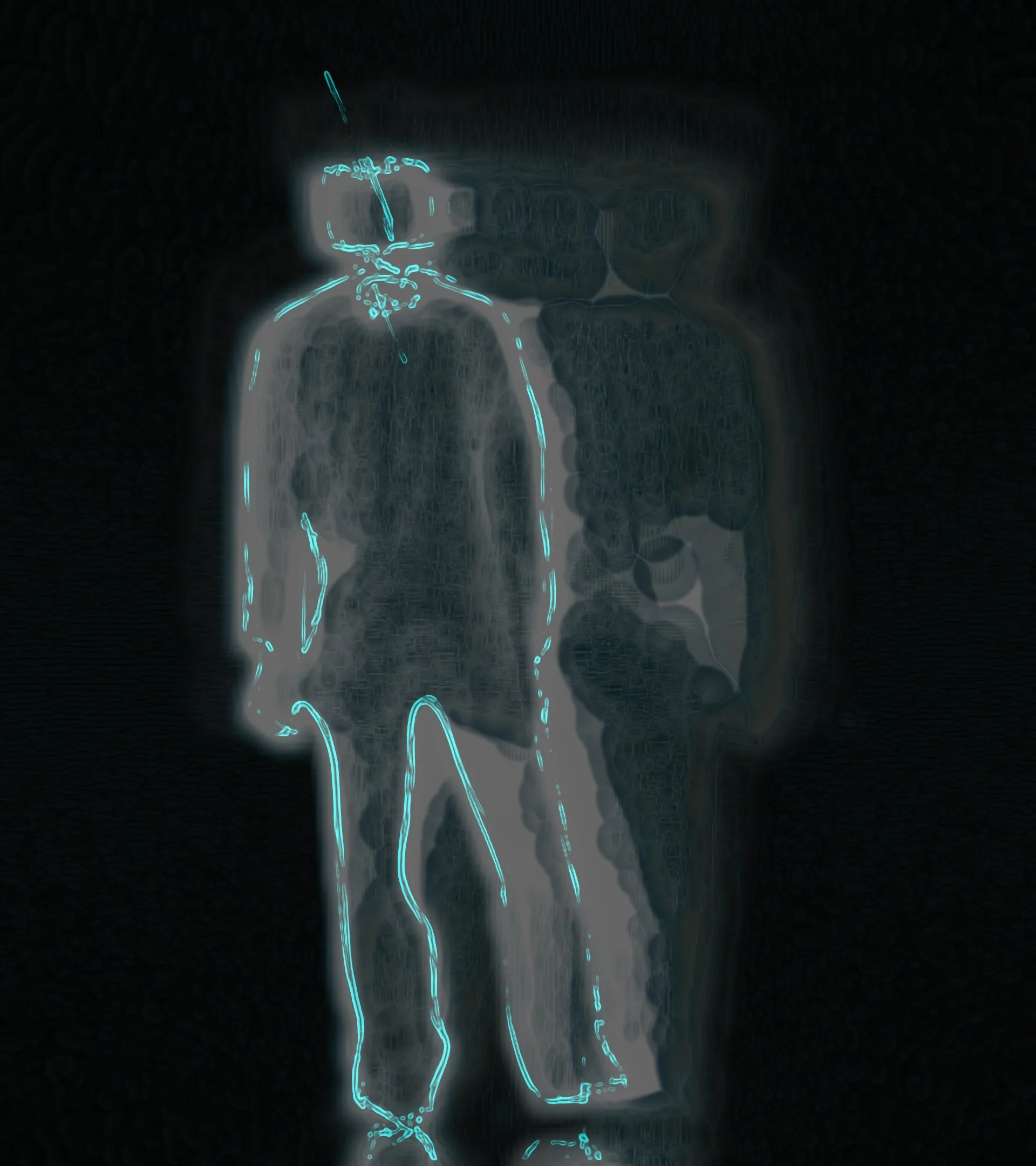

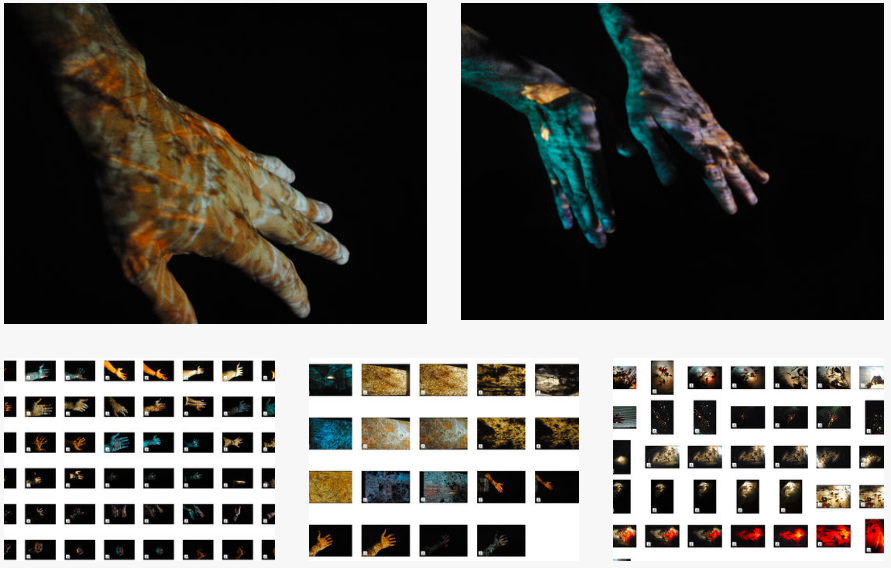

Depth Cam RnD (2025)

This research started in 2016 and is still going!

This is where I am at currently.

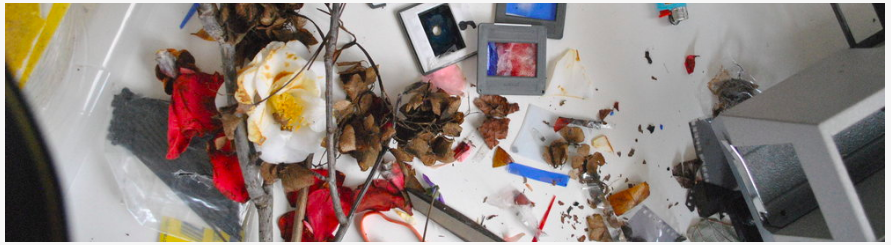

Custom Interior Lighting (2024)

I have been collecting, fixing, modding and assembling lights for a very long time, starting as a teenager making lights for my bedroom and going to the local shop in my tiny town to buy different light globes. I distinctly remember walking back home in a hurry, eager to get to my room and run my tests.

Over the years this passion has stayed with me, growing, changing and evolving as I did.

Recently this passion sparked again, stronger than ever, and I have decided to intentionally set aside some time to build and perfect this craft.

This is a prototype, made primarily with reclaimed and second-hand materials: repurposed, reinvented, modded, enhanced and reassembled into its current shape.

Using LED lighting (2024)

LED lighting got a really bad reputation when it first hit the market some 15 years ago. Lighting designers were reluctant to use LED fixtures for a very long time, and for very good reasons: low output, poor colors, poor color rendering, unusable beams…

The technology has evolved a lot over the last decade, and LED lights have become essential tools in a lighting designer's list of ingredients, as well as on TV and film sets. Perfectly balanced white, realistic skin tones, saturated colors, sudden color changes, snapping to full brightness or full blackout in a fraction of a second… LED lighting has a huge repertoire and range of use cases to offer. Just make sure to buy or hire good quality ones!

Below is a still from the Assembly by Raghav Handa. Photo by James P Brown, lighting by Fausto Brusamolino.

Experiment with Real Time filming techniques (2024)

Here are some stills from a recent filming session. Custom depth sensors and motion detection techniques are used in real time to inform the creative process.

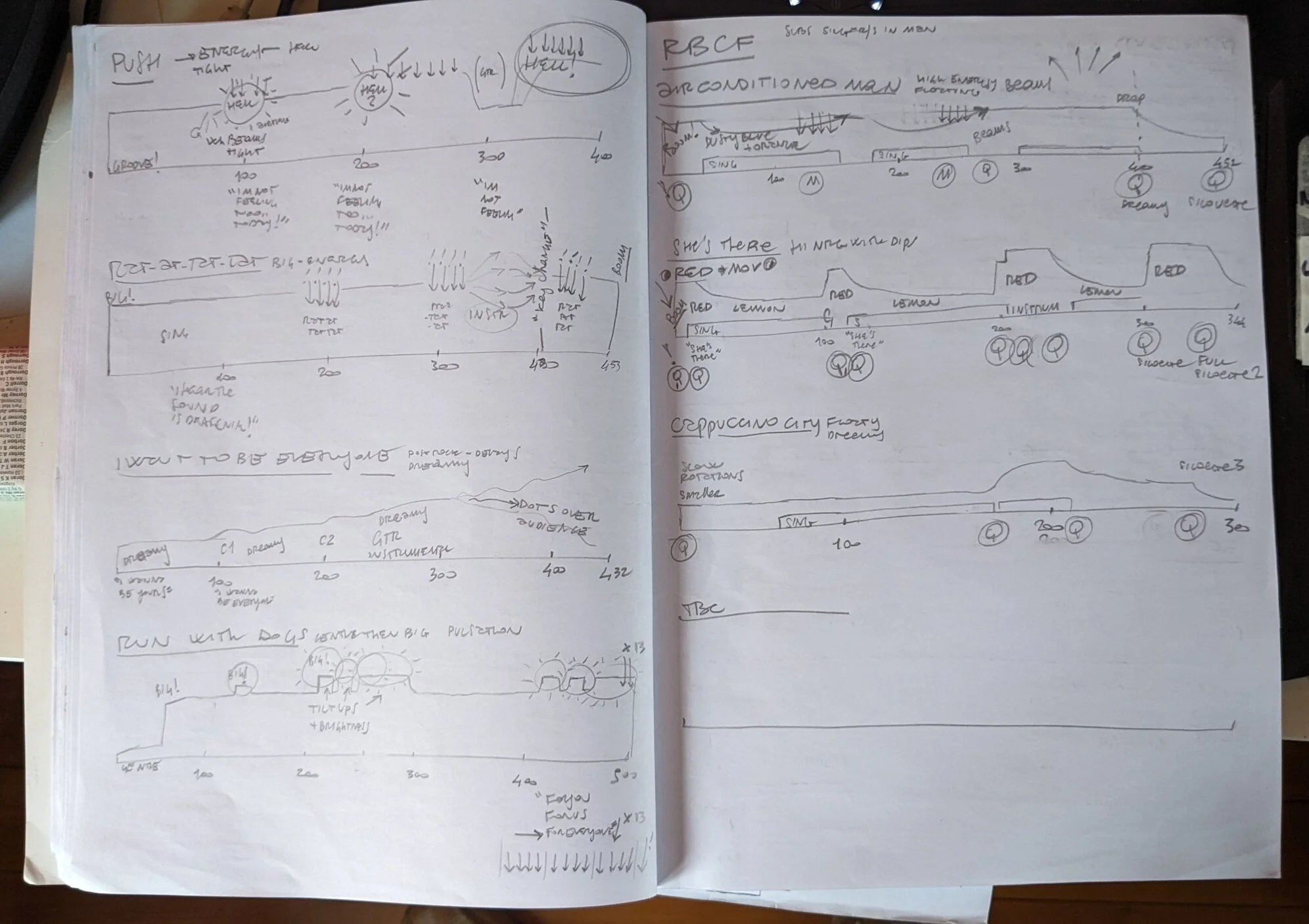

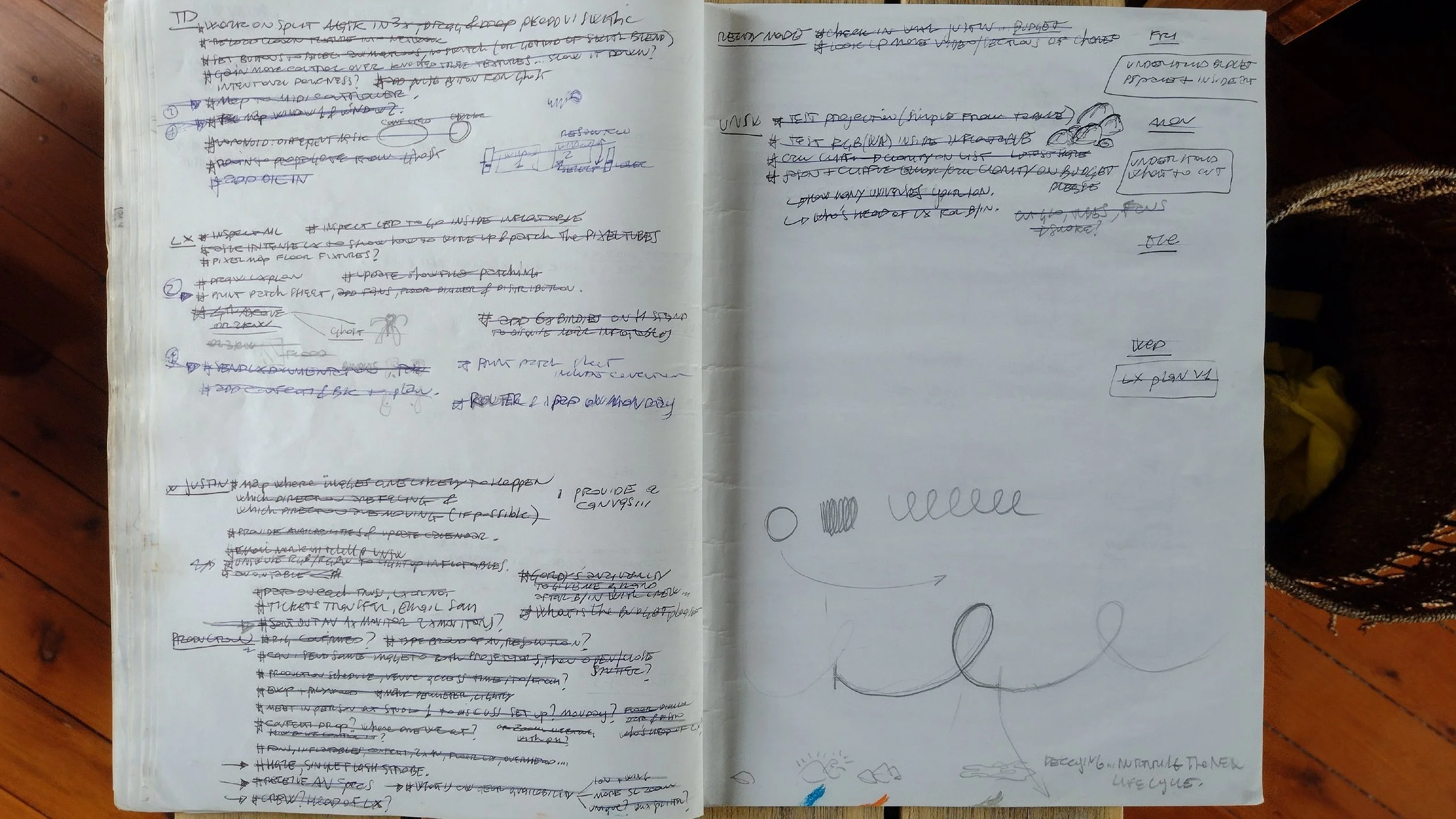

Mapping Mapping Mapping, Live Live Live (2023)

Sketching on timelines to prepare for an upcoming series of live music shows.

These are first-impression sketches that help me navigate the structure of each song and start collecting ideas as they come, getting a general sense of each song’s flow, intensity and dynamic range.

These preliminary sketches are then converted into lighting states and recorded into lighting scenes, as well as operated manually during the performance itself.

These pencil lines, words, numbers, mountains and valleys eventually convert into designs for a live show.

Staring Straight into the Sun (2023)

Excerpt from a sound and visual installation devised in collaboration with artist and composer James Peter Brown.

Staring Straight into the Sun. 3D render

Research on visual project Flow (2023)

Often I find myself returning to this, contemplating, learning, absorbing, tweaking and then walking away.

Researching seamless organic movement, color combinations, shapes, layouts and composition

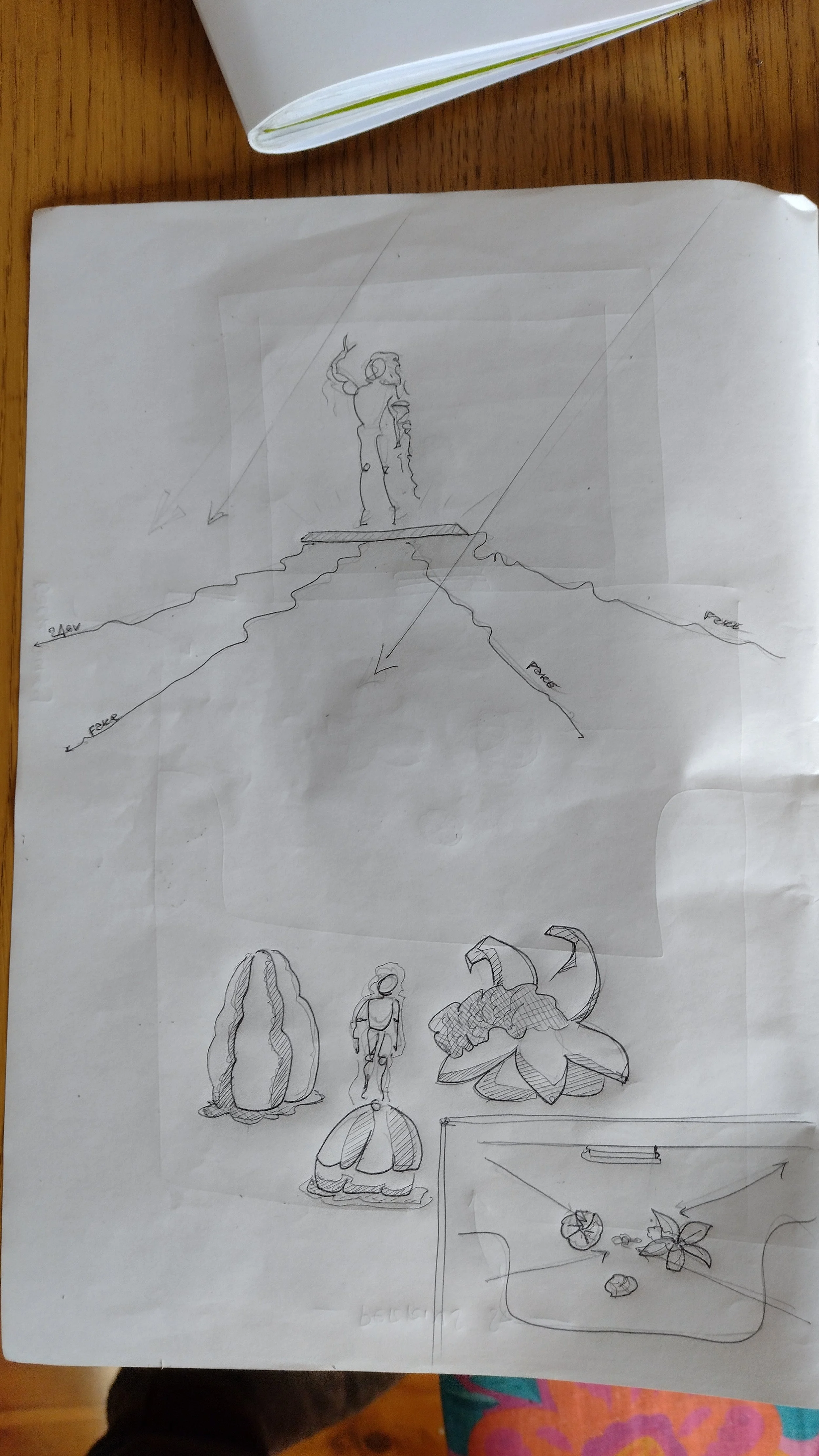

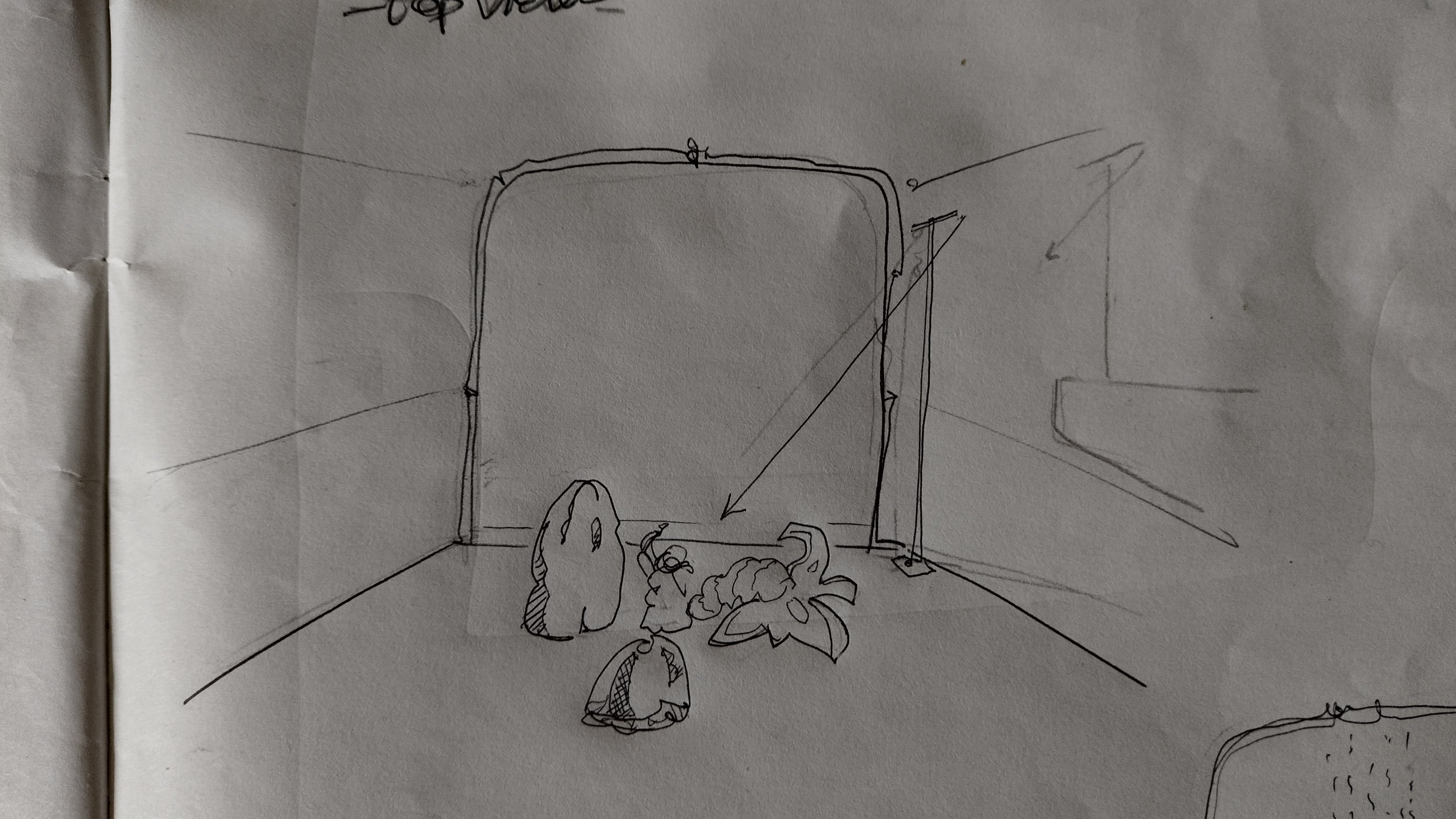

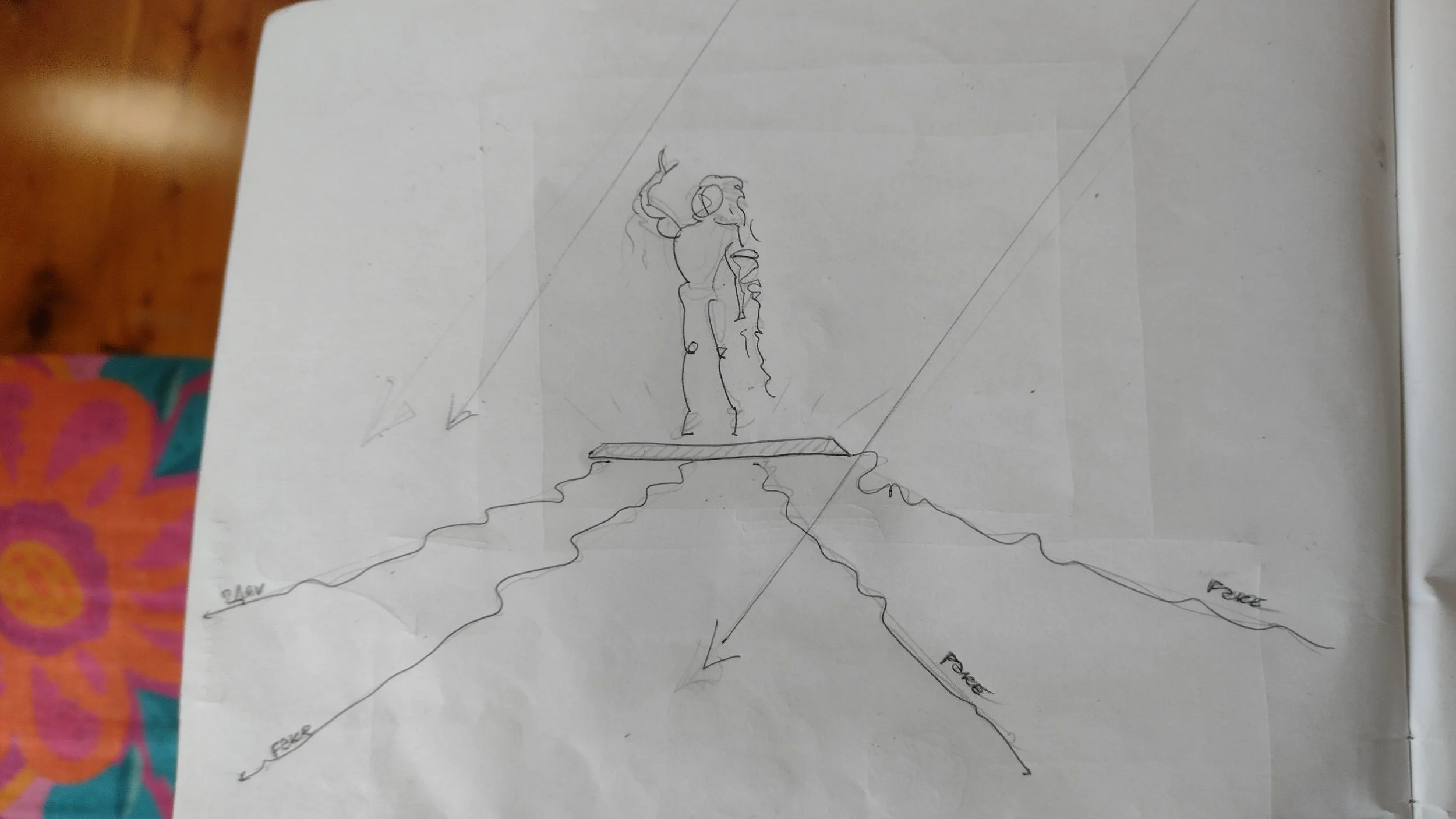

The value of sketching with pen and paper in 2023 (2023)

Deeply immersed in a workflow made of file sharing, detailed 3D drawings and realistic renders, I still enjoy and incorporate pen and paper in my creative workflow.

Below are a handful of preliminary sketches, aimed at boiling the design concepts and ideas down to their most essential features.

Research (2022)

Exploring depth of field and parallel image casting in real time.

In these screenshots, the presence of a human body is detected and manipulated in real time, questioning what the machine can see, what we can see, and what interpretations we are giving to the world surrounding us.

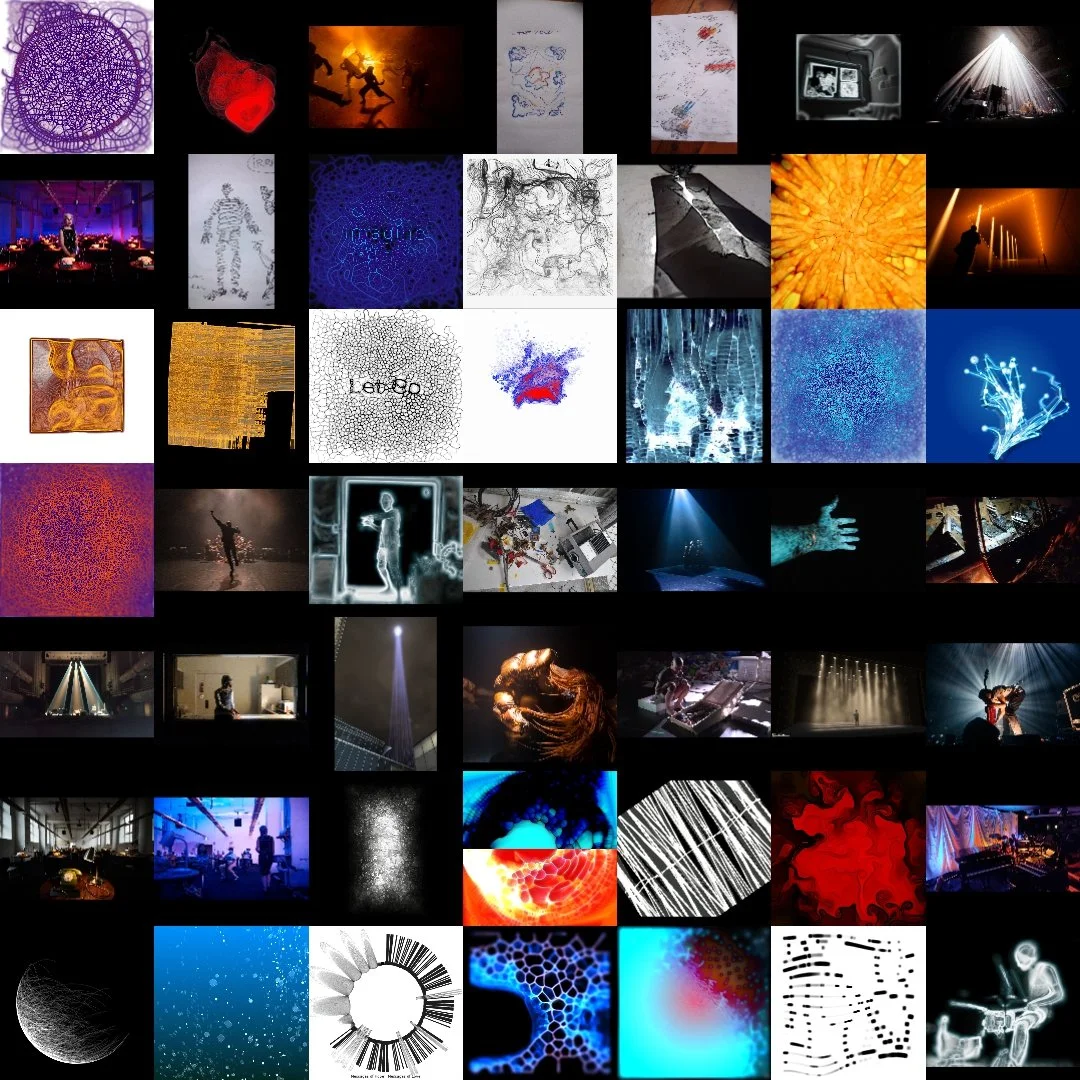

Collage (2022)

Colors, shapes, patterns. A layout of some of my work at a glance.

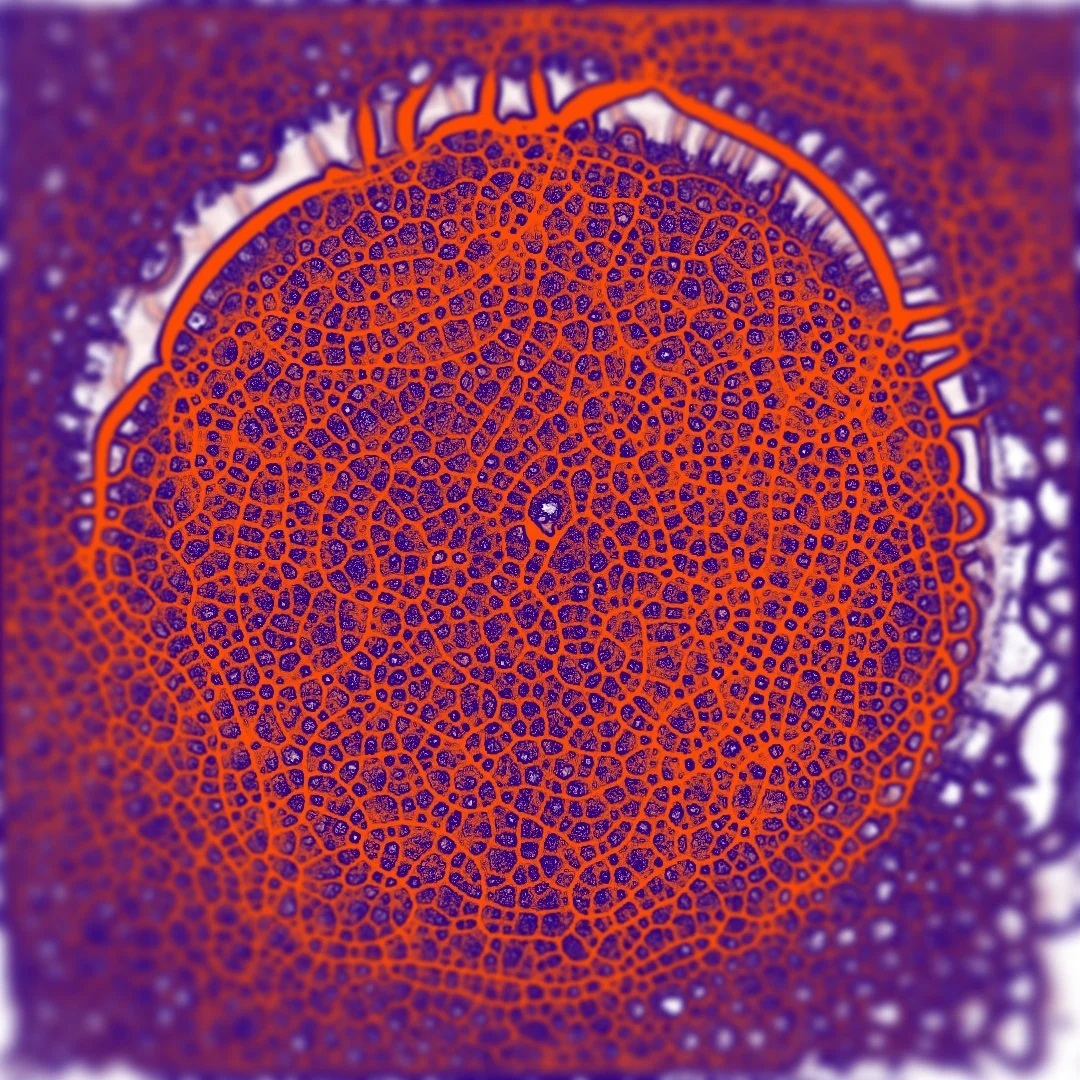

Visual Research on Organic Shapes and Movement (2021)

These images are an extract from a larger body of visual research carried out during 2021.

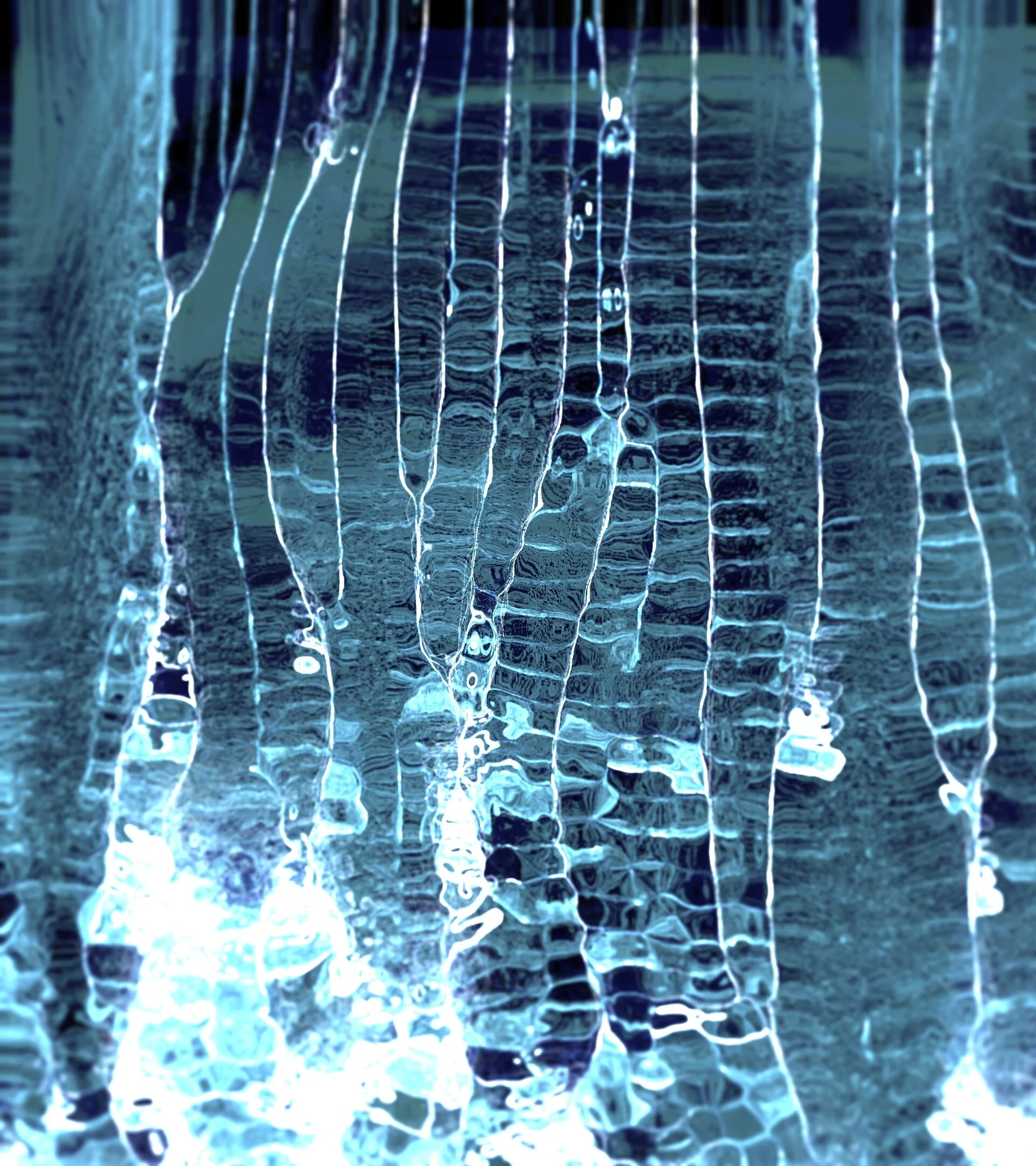

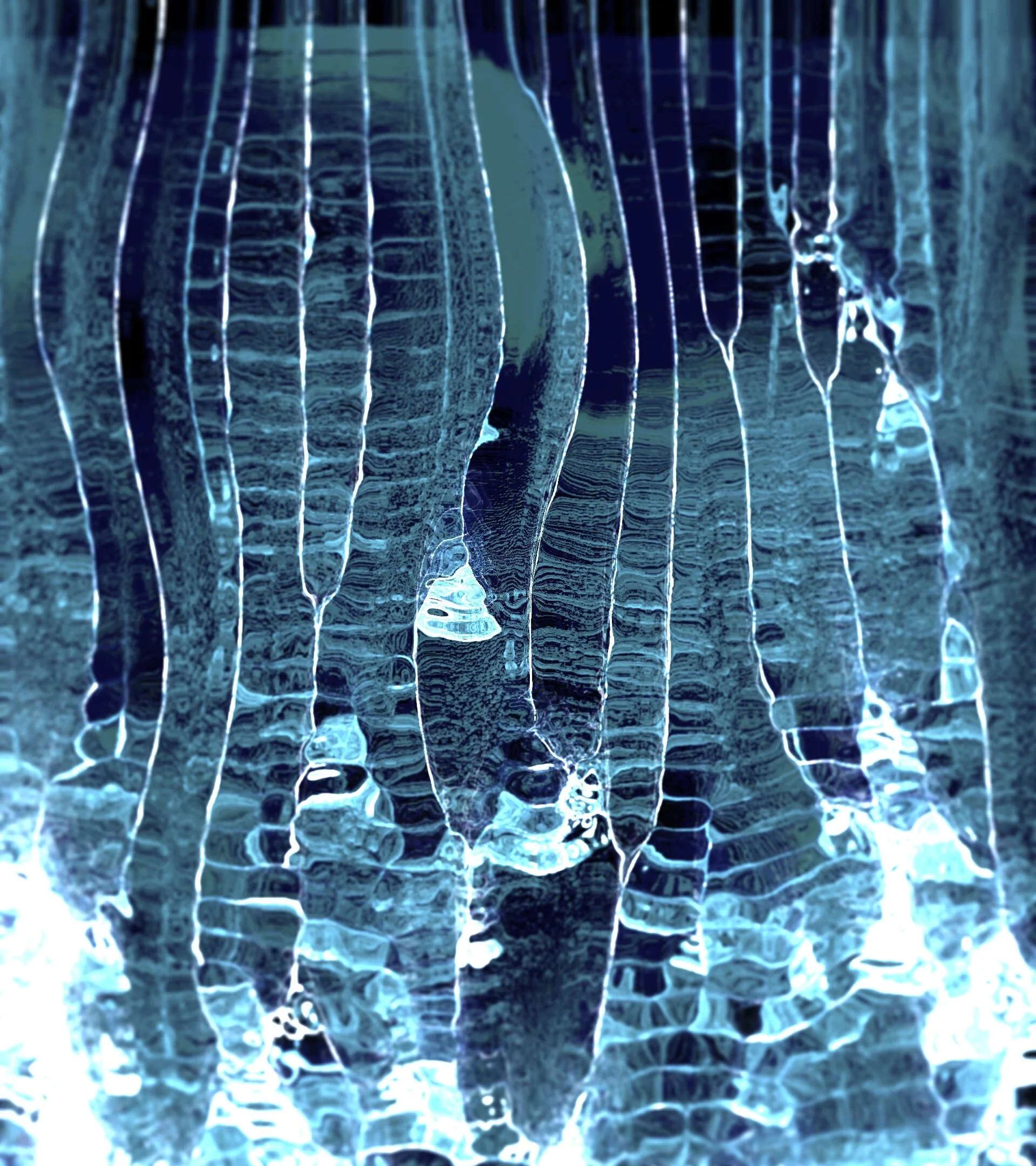

Visual Research on Water (Dec 2020)

Preliminary research on naturalistic water movement for an upcoming commissioned project.

Metal printed plates available on request.

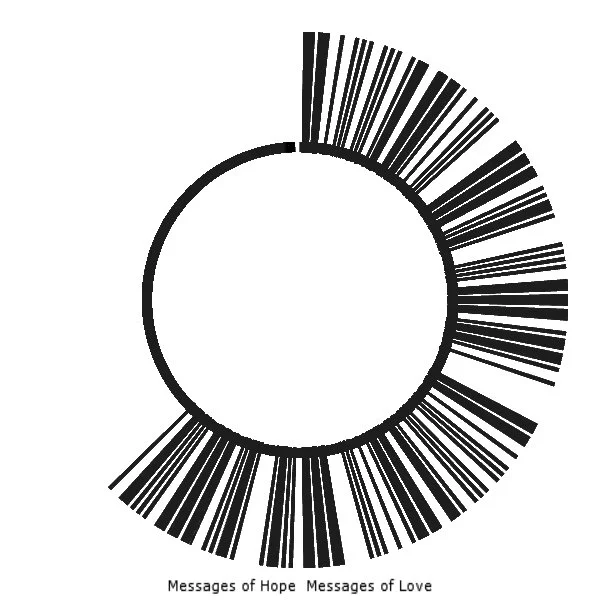

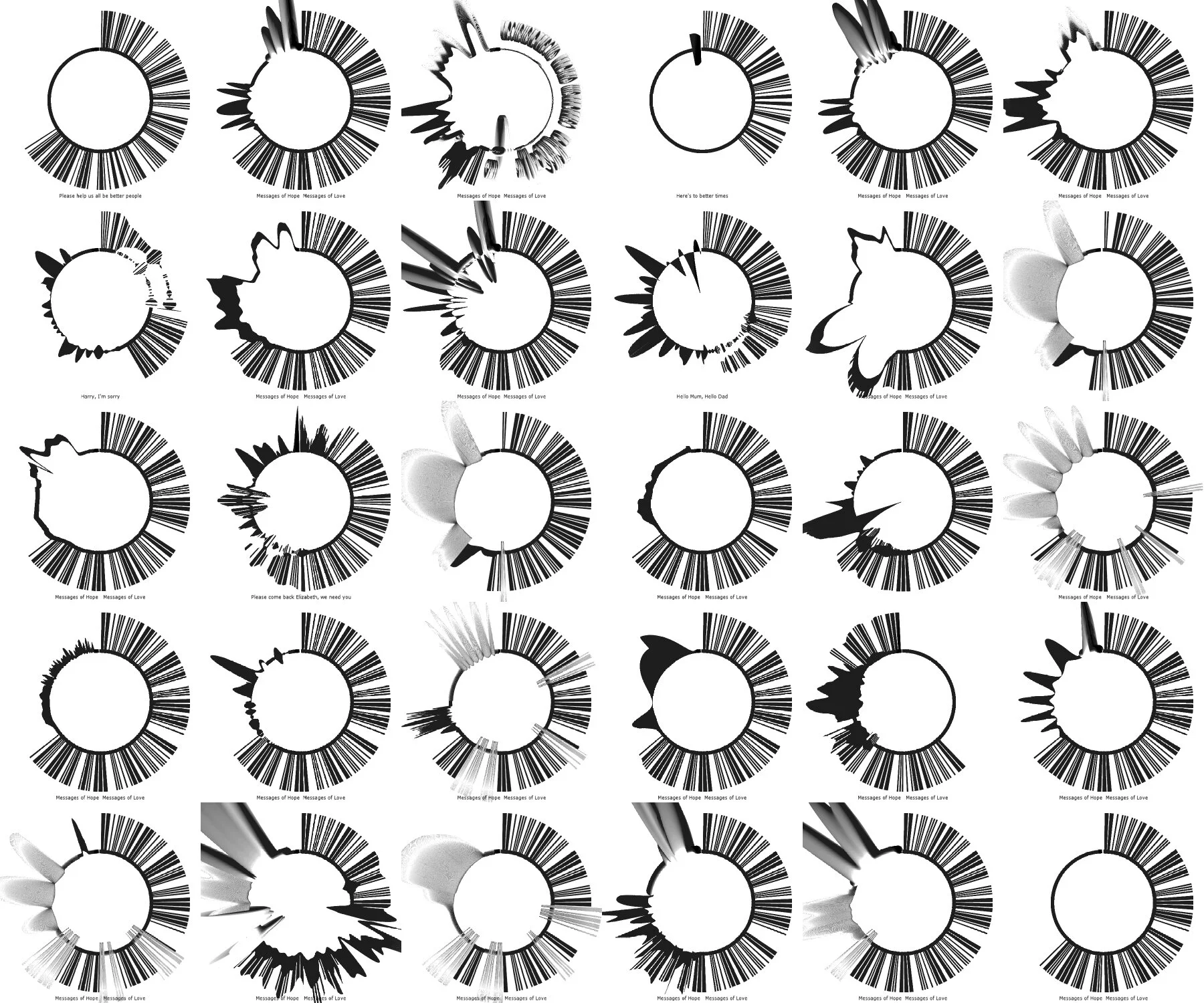

MoH MoL Data Visualization (Aug 2020)

Some screenshots of the data visualization I have made as part of the design and research for Messages of Hope Messages of Love.

Studies on Reflections (May 2020)

I often find myself captured by the reflections generated by common objects around the house. Recently I have started gathering the photographs and videos taken during these texture and lighting explorations.

This is part of my ongoing research on reflections, which I have been slowly but steadily pursuing over the last four years.

Lighting / Set Design Concepts and 3D Renderings (Mar 2020)

Some screenshots of my latest lighting design concepts, and the philosophy behind them.

“… now more than ever it's important that we leverage the performing arts industry for what it is, and for what it can offer at its best: a live performance, happening in front of an audience, physically present to savour that event... every time a little different than the night before, every time a little unique... a story telling that grows and slightly changes every time it gets told, a live performance with all its imperfections and magic, unexpected moments. 3D renderings for me it's a communication tool that I recently discovered, a way to convey an idea, an image, a composition, a storyboard, a way to explore a set up with the rest of the creative team, away and well before cables and tight schedules are in place…”

Video Design and Depth Camera (Feb 2020)

This is a short clip from my second round of experiments based on a technique I started developing in June 2018. Lately I had the chance to refine this technique, finding a look, an aesthetic and a captivating result that received a very positive response from the online creative coding community.

Video Design (Feb 2020)

Copper Plate (2020)

A digital, sound-reactive video animation inspired by the reflective lighting quality of copper. Sound waves are applied to the plate, manipulating its shape and texture.

Full video with sound available on request.

Mapping Wind Speed and Direction to Real Time Responsive Lighting Installation (December 2019)

First tests used as proof of concept for mapping wind speed and direction to control a lighting installation in real time.

This prototype was developed around the idea of designing an immersive environment, which blends digital, analog and sensorial elements to create a space that is connected to humans, while feeling at the same time somehow unreal, magic and evocative.

In this case, the wind that we perceive on our skin is mapped in real time to a responsive lighting installation, a designed environment, which augments and recreates this tactile, essential and unique weather condition.

Morse Code Testing (Nov 2019)

Testing a lighting effect, which will be part of Michaela Gleave’s upcoming exhibition.

The custom-made software translates any provided text into the equivalent on/off Morse code pattern, which is then reproduced faithfully by the lighting fixture.

The hardware needed to run this installation was designed to be simple to use, small, standalone, plug & play and ready to go. The software was developed to be flexible and easy for the lead artist to modify while installing the artwork.

The software was based on the code written by Rene Christen.
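As a rough illustration of the text-to-light translation described above (this is not the software used for the exhibition), the sketch below turns a string into a list of on/off steps. It assumes standard Morse timing: a dot is 1 unit on, a dash is 3 units on, with gaps of 1, 3 and 7 units between symbols, letters and words. The function name `text_to_light_pattern` and the step format are hypothetical.

```python
# International Morse code for letters and digits.
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
    "0": "-----", "1": ".----", "2": "..---", "3": "...--", "4": "....-",
    "5": ".....", "6": "-....", "7": "--...", "8": "---..", "9": "----.",
}


def text_to_light_pattern(text):
    """Return a list of (is_on, units) steps for a light fixture.

    Assumed timing: dot = 1 unit on, dash = 3 units on, 1 unit off between
    symbols, 3 units off between letters, 7 units off between words.
    Characters without a Morse entry are skipped.
    """
    steps = []
    words = text.upper().split()
    for w_index, word in enumerate(words):
        letters = [MORSE[ch] for ch in word if ch in MORSE]
        for l_index, code in enumerate(letters):
            for s_index, symbol in enumerate(code):
                steps.append((True, 1 if symbol == "." else 3))
                if s_index < len(code) - 1:
                    steps.append((False, 1))  # gap between symbols
            if l_index < len(letters) - 1:
                steps.append((False, 3))  # gap between letters
        if w_index < len(words) - 1:
            steps.append((False, 7))  # gap between words
    return steps
```

Multiplying each `units` value by a chosen unit duration gives the time the fixture stays on or off for that step.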

Visual Experiments (September 2019)

Sound and Generative Visual Experiments (August 2019)

Below are a couple of real-time, sound-responsive visuals I have designed. Both tests were visually inspired by organic movements found in nature.

Fake Identities (May 2019)

Working on an app that generates fake identities.

As part of my research for an upcoming project, I have started investigating and using coding for topics concerning privacy, personal data and fake identities. The personal information below is generated using an algorithm.

Digital Self Portrait (May 2019)

A self portrait using generative coding.

Visualisation Tool for a Lighting Installation (May 2019)

First drafts of a pre-visualisation tool I am developing for a lighting installation. These effects are generated and manipulated in real time and can control LED lighting strips.

Prototype Surface Transducer Research (May 2019)

I have started some tests with transducer speakers, to be used in an installation I am devising together with a composer. The surface transducer speakers can be installed against any solid surface (glass, timber, metal…) to reproduce sound in a wide variety of textures and qualities.

For testing purposes, this prototype was simply mounted on a piece of scrap timber. After the prototyping sessions the electronics will be neatly packaged in a small container.

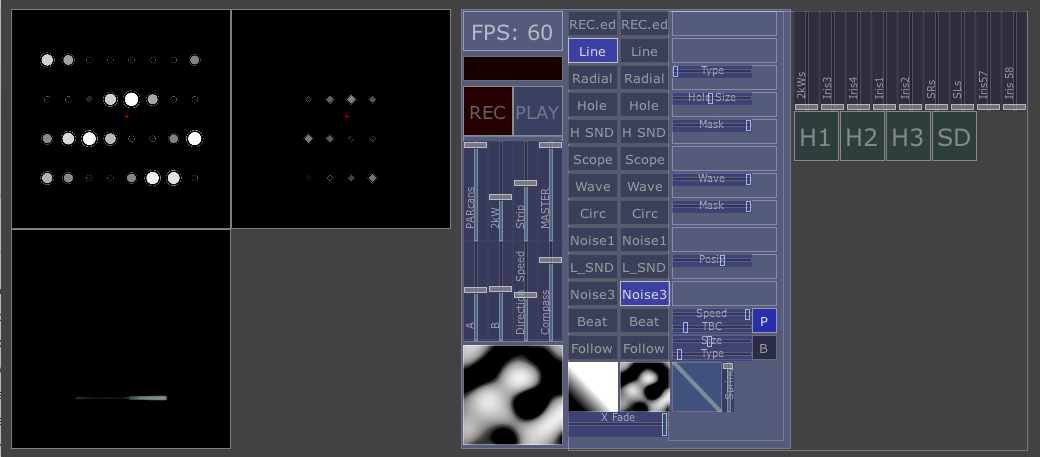

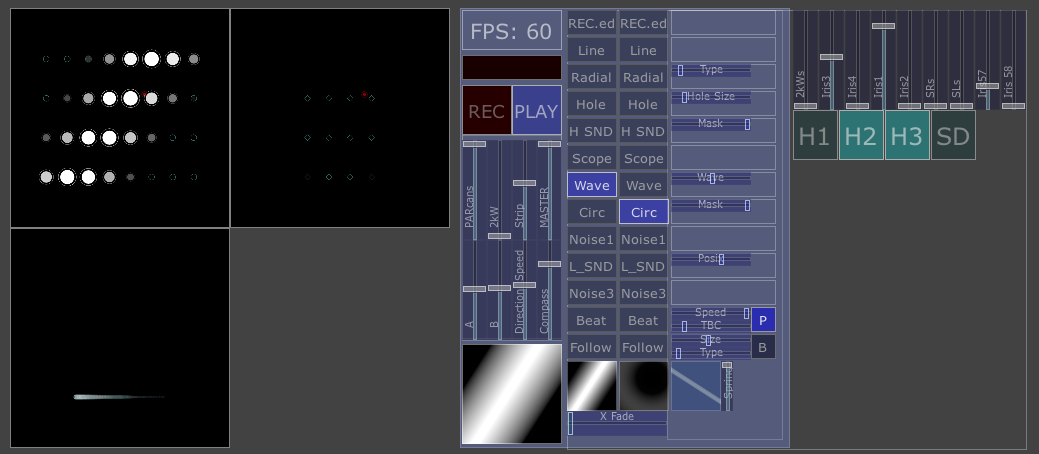

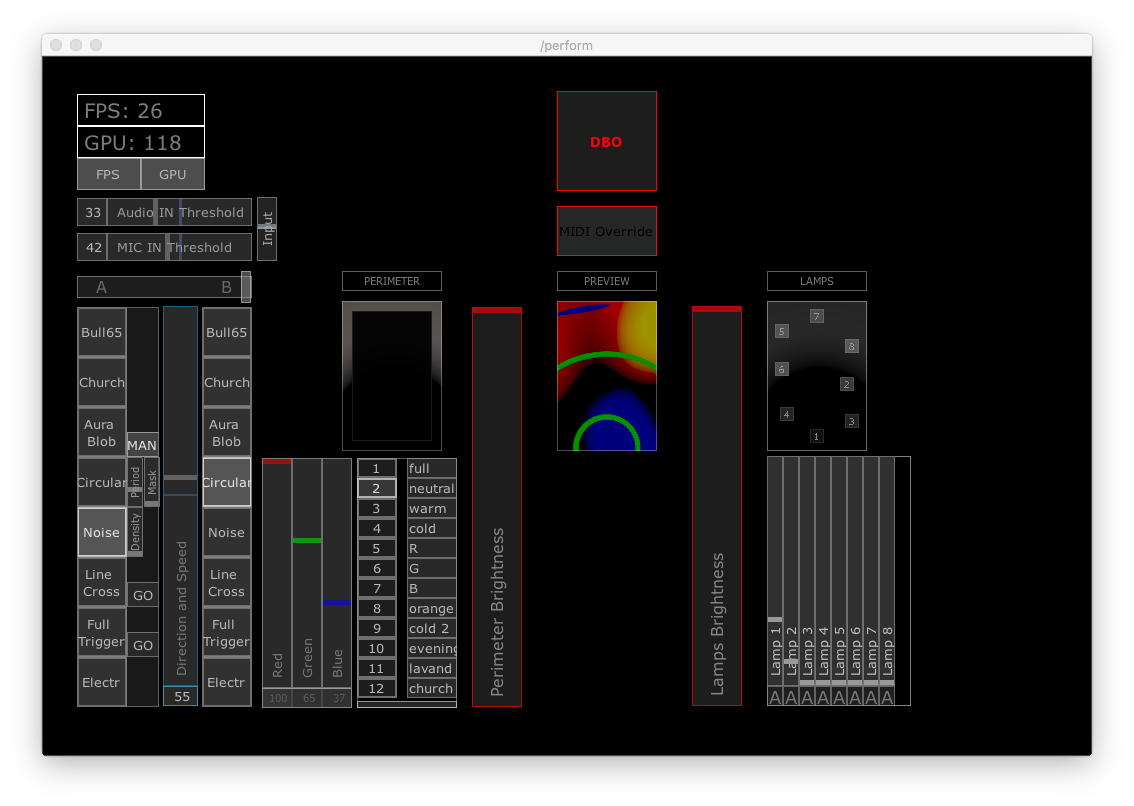

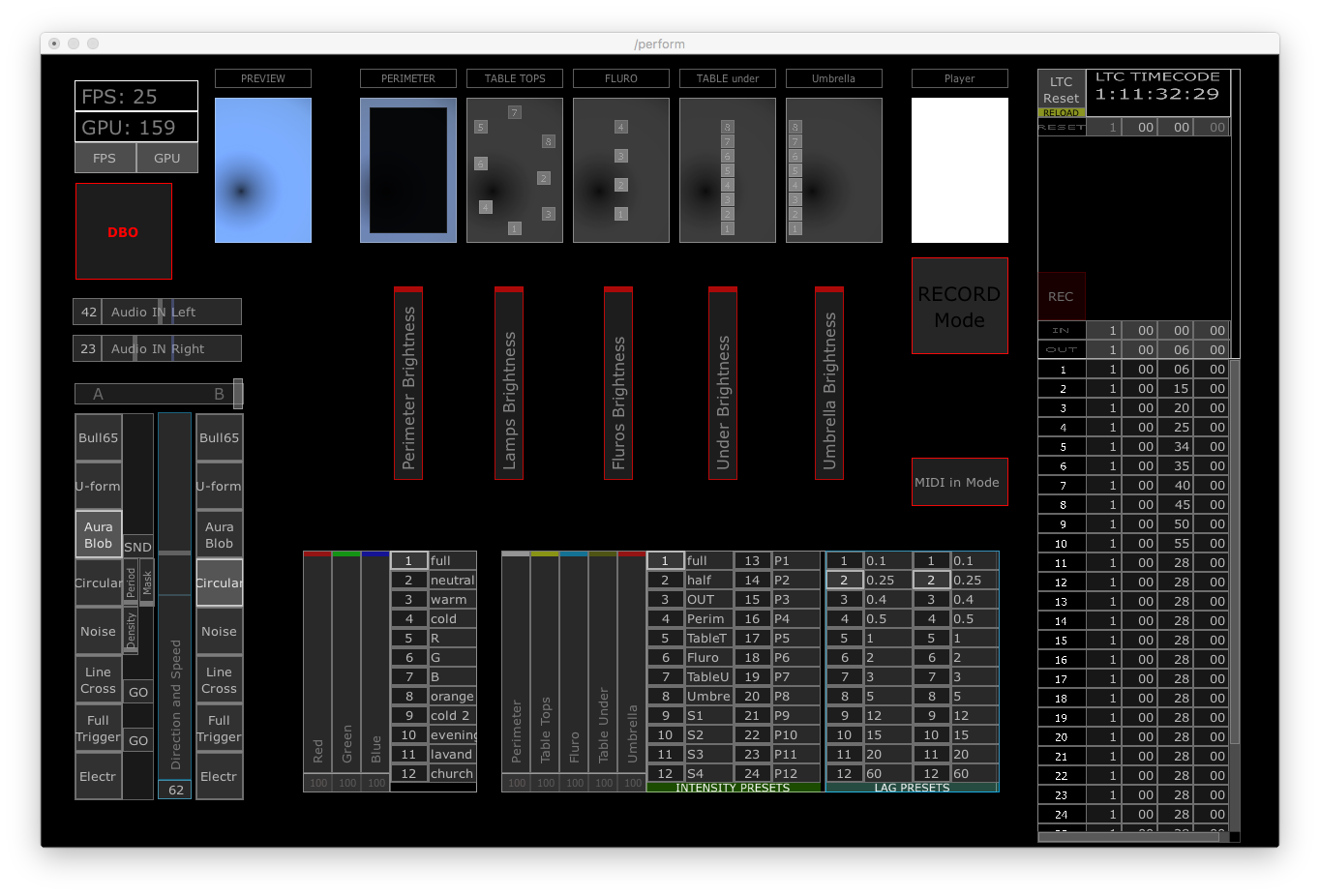

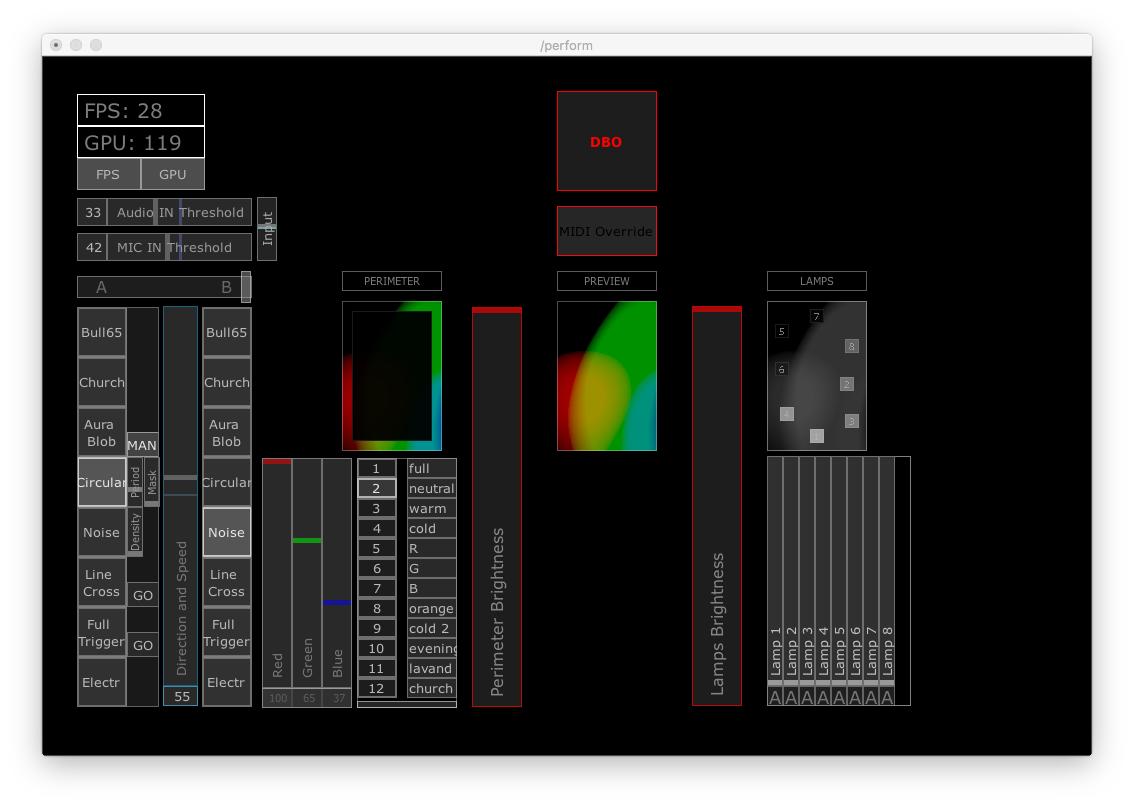

Custom Made Lighting Software and Interface (April 2019)

A couple of screenshots of the software I developed to control my lighting design during an artistic residency in April for a contemporary dance show. The lighting effects were produced and manipulated in real time, as well as triggered live by several sound parameters, achieving unique lighting effects that were cohesive and in complete sync with the live soundtrack.

This production has received very positive feedback and will be officially presented in the second half of 2020.

Volumetric Lights 1.0 (Nov 2018)

Initial tests on Volumetric Lights, custom-made software I started developing and exploring in mid 2018. This software allows me to run 3D animations through a grid of lights installed in a physical space. It also has a visualisation tool, displayed in the screen captures below. This is an indispensable feature that will help with pre-programming effects and with discussing specific lighting solutions and the overall look.

In the draft examples below, animations are drawn in a complex virtual lighting set up to control several parameters of each lighting fixture.

Interface for Live Control and Manipulation of Lighting Clusters (Oct 2018)

These are screenshots of the interface of a piece of software I have developed to control clusters of lighting and other scenic effects.

This custom-made software generates live video content, which is then mapped to lighting fixtures across the stage, producing unique effects with great dynamic range: from an in-your-face digital look all the way to subtle, minimal and organic.

Tailored versions of this software were then used and integrated in the lighting design of Obscene Madame D (2018) and The NightLine (2018).

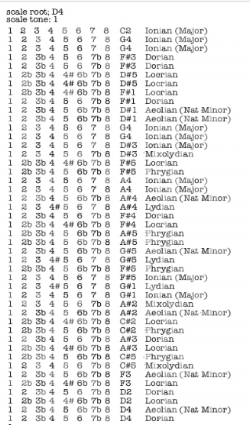

Ear Training App (Aug 2018)

This is a screenshot of an app I have developed for personal use. The app generates and plays notes in different major scale modes, changing root note every two rounds, or any time desired.

The purpose of this app is ear training and scale recognition for musicians. It can be connected to any digital audio workstation (like Ableton Live, Logic, etc.) or any physical MIDI instrument (like keyboards, synths and so on), so that the notes can be heard in real time.
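A minimal sketch of how notes in the major scale modes could be generated as MIDI note numbers, assuming each mode is obtained by rotating the whole/half step pattern of the major scale. This is an illustration only, not the app's actual code; the names `mode_notes` and `practice_round` are hypothetical, and sending the notes to a MIDI device is left out.

```python
import random

MAJOR_STEPS = [2, 2, 1, 2, 2, 2, 1]  # semitone steps of the major scale
MODE_NAMES = ["Ionian", "Dorian", "Phrygian", "Lydian",
              "Mixolydian", "Aeolian", "Locrian"]


def mode_notes(root, mode_index):
    """MIDI note numbers for one octave of a mode, starting on `root`."""
    # Rotating the major-scale step pattern gives the step pattern of the mode.
    steps = MAJOR_STEPS[mode_index:] + MAJOR_STEPS[:mode_index]
    notes = [root]
    for step in steps:
        notes.append(notes[-1] + step)
    return notes


def practice_round(root, mode_index, length=8, rng=random):
    """Pick `length` random notes from the chosen mode for one round."""
    scale = mode_notes(root, mode_index)
    return [rng.choice(scale) for _ in range(length)]
```

For example, `mode_notes(62, 1)` gives D Dorian starting on MIDI note 62, and a caller could pick a new `root` every two rounds.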

The interface that I designed was intentionally kept minimal, and similar to a typewritten document, with letters typed with uneven pressure, occasionally leaving some ink traces. The interface layout was inspired by receipt printers, still commonly used nowadays.

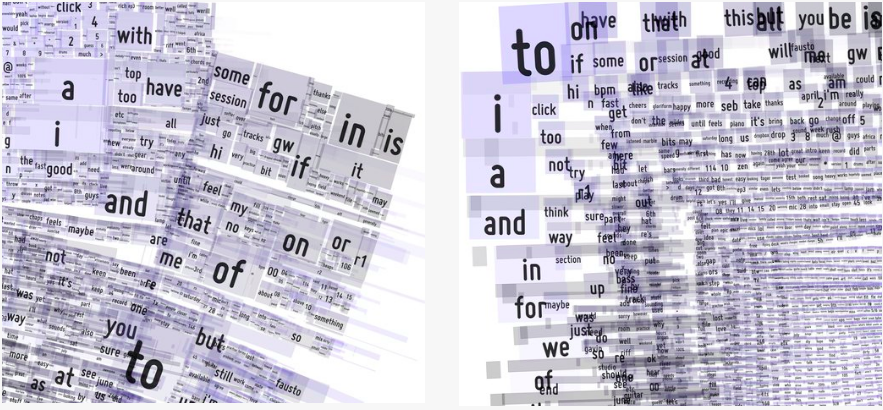

Prototype ideas for a CD cover (June 2018)

The content of these compositions is generated by the words used in emails exchanged by the band members. The more recurrent a word is, the larger the rectangle containing that word is.

The workflow of modern music bands often revolves around digital platforms, like shared Dropbox folders, Google Drive spreadsheets and shared calendars, and often relies on emails for both creative and production purposes. These digital platforms can become vital tools, and an integral part of the band's creative process.

Further refinements to this idea will include code to omit certain prepositions and articles, in order to let more significant words come to the surface.
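The word-frequency idea, including the planned omission of articles and prepositions, can be sketched roughly as follows. This is an assumption-laden illustration rather than the code behind the compositions: the stop-word list, the linear size scaling and the name `word_rectangles` are all hypothetical.

```python
from collections import Counter
import re

# Hypothetical stop-word list; a real project would choose its own.
STOP_WORDS = {"a", "an", "the", "of", "to", "in", "on", "at", "for", "and", "with"}


def word_rectangles(text, max_size=100.0):
    """Map each significant word to a rectangle size.

    The most frequent word gets `max_size`; other words are scaled
    linearly by their count relative to the most frequent word.
    """
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(w for w in words if w not in STOP_WORDS)
    if not counts:
        return {}
    top = max(counts.values())
    return {word: max_size * count / top for word, count in counts.items()}
```

Feeding in the concatenated text of the emails would return one size per word, ready to be laid out as rectangles.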

Depth Camera (June 2018)

As part of my research, I have started investigating the different uses and potential of a depth camera.

The depth camera is a device that is able to read bodies or objects, in relation to the physical position they occupy, regardless of the amount or type of light used on the subject itself.

For this research I have designed an app that can receive depth data from the camera and manipulate several key parameters in real time, such as shifting the distance at which depth is read and altering the depth of field itself.

The result is a highly engaging and inspiring platform for the performer, offering a unique and captivating aesthetic.
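One simple way to picture the two parameters mentioned above is a movable depth window: only readings between a near distance and that distance plus a range are kept. The sketch below assumes depth arrives as a grid of millimetre values and maps it to brightness between 0.0 and 1.0. It is not the app's code; `depth_window`, `near_mm` and `range_mm` are hypothetical names.

```python
def depth_window(depth_mm, near_mm, range_mm):
    """Map a grid of depth readings to brightness values in 0.0-1.0.

    Readings inside [near_mm, near_mm + range_mm] fade linearly from 1.0
    (at the near edge) to 0.0 (at the far edge); everything outside the
    window is 0.0. `range_mm` must be greater than zero.
    """
    result = []
    for row in depth_mm:
        out_row = []
        for d in row:
            if near_mm <= d <= near_mm + range_mm:
                out_row.append(1.0 - (d - near_mm) / range_mm)
            else:
                out_row.append(0.0)
        result.append(out_row)
    return result
```

Changing `near_mm` shifts the distance being read, while changing `range_mm` widens or narrows the slice of space that remains visible.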

Choreographers and Performers: Alan Schacher, WeiZen Ho

AFTRS Advanced Lighting Skills Course (Feb 2018)

These stills were taken during a 3-day intensive course at AFTRS on lighting for camera, a workshop/class conducted by director of photography Steve Arnold.

Each day we lit several different scenes, covering a wide range of styles and techniques. Taking turns, each person attending the workshop had the chance to lead the lighting setup, seeking feedback and input from the rest of the group.

The same actors and set were used for all the scenes, but different lighting setups were discussed and built for each filming exercise.

Drama: Low Key, Low Contrast

Comedy: High Key, Low Contrast

Horror: Low Key, High Contrast

Drama: Low Key, High Contrast

Prototyping Shadow Places (Aug 2017)

First round of tests for Shadow Places, a large outdoor installation exhibiting video and textile art works, performances and lighting installations.

Part of the scope of these outdoor tests was to find solutions on how to disguise the lighting source while achieving the desired look.

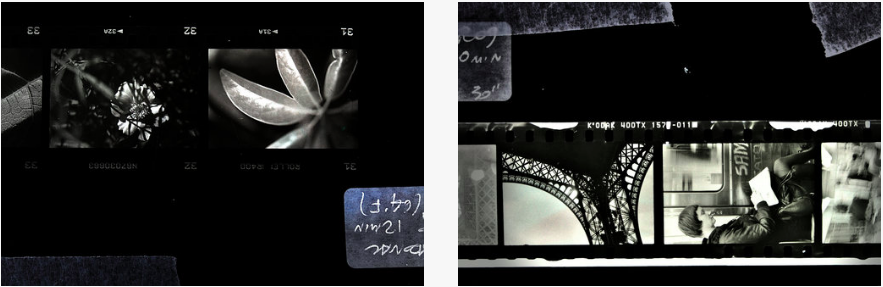

Black and White Photography (May 2017)

These photos are part of a larger collection of stills that were taken on film, then developed in a darkroom, experimenting on several different stages of the process.

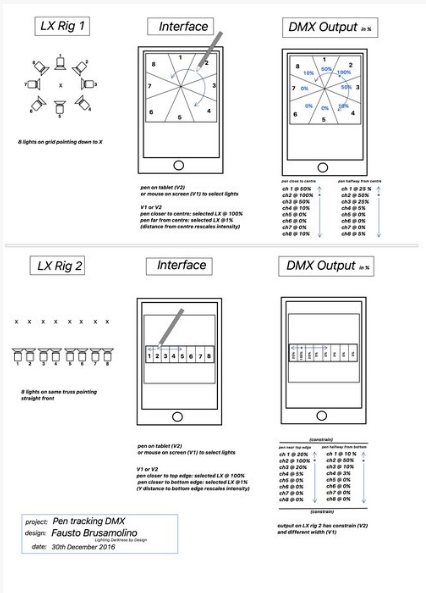

Interface Prototype for Interactive Lighting Control (March 2017)

This is a working prototype of a piece of software to control lighting. The lighting intensity, and other lighting parameters if needed, can be manipulated by simply moving the mouse over the screen, in an intuitive and immediate way. This system could be used to control an array of down lights, for example, to easily follow the position of a dancer on stage.

Note how the intensity of each light decreases the further away it is from the centre of the circle. This type of visualisation provides a highly readable and pleasant look, designed to facilitate the pre-design stage and live show operation. The larger the circle, the brighter that particular light will be. The number in the centre of each sphere provides visual feedback on the intensity of each individual light, scaled to a familiar 0 to 100 range.
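The distance-based behaviour described above can be sketched as below. The linear falloff, the `radius` parameter and the name `light_levels` are assumptions made for illustration; the prototype may well use a different curve.

```python
import math


def light_levels(lights, pointer, radius):
    """Return one 0-100 intensity per light.

    `lights` is a list of (x, y) positions and `pointer` is the (x, y)
    position of the mouse. A light at the pointer is at 100; intensity
    falls off linearly to 0 at `radius` and stays 0 beyond it.
    """
    levels = []
    for x, y in lights:
        distance = math.hypot(x - pointer[0], y - pointer[1])
        level = max(0.0, 1.0 - distance / radius) * 100
        levels.append(round(level))
    return levels
```

Calling this on every mouse move, with the positions of the down lights as `lights`, would make the brightest point follow the pointer across the grid.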

Interactive Lighting Control (Jan 2017)

This is the initial draft of an interactive lighting control for a cluster of lights.

This draft was then fully developed and used in 2017 in different configurations, serving as a prototyping, testing and lighting research tool. These initial prototypes were subsequently fully developed and used for the design and plotting of Obscene Madame D and The NightLine in 2018.

Animated Textures (Jan 2017)

Digital textures created with creative coding software. These textures are computer generated, using lines of code to achieve the desired effect. Some of these textures are inspired by motion that exists in nature, like the motion of leaves, fluids and air, whereas others leverage and gravitate towards the digital realm.

Self Portraits (2017)

Some of the self portraits created while experimenting with live camera, creative coding and lighting manipulation.

Research Material (2016)

Exploring custom lighting textures & materials overlay

Research Material (2015)

Overlaying materials, custom gobos and textures